In a pairwise study, Med-PaLM 2 answers were preferred to physician answers across eight of nine axes considered. Both Med-PaLM and Med-PaLM 2 performed encouragingly across three datasets of consumer medical questions. Our model’s long-form answers were tested against several criteria - including scientific factuality, precision, medical consensus, reasoning, bias, and likelihood of possible harm - which were evaluated by clinicians and non-clinicians from a range of backgrounds and countries. Importantly, in this work we go beyond multiple-choice accuracy to measure and improve model capabilities in medical question answering. Med-PaLM 2 improves on this further with state of the art performance of 86.5%. Med-PaLM was the first AI system to obtain a passing score on USMLE-style questions from the MedQA dataset, with an accuracy of 67.4%. We assessed Med-PaLM and Med-PaLM 2 against a benchmark we call ‘MultiMedQA’, which combines seven question answering datasets spanning professional medical exams, medical research, and consumer queries. However, ensuring model responses are accurate, safe, and helpful has been a crucial research challenge, especially in this safety-critical domain. The generation capabilities of large language models also enable them to produce long-form answers to consumer medical questions. It takes years of training for clinicians to be able to accurately and consistently answer these questions. In short, a combination of medical comprehension, knowledge retrieval, and reasoning is necessary to do well. You are presented with a vignette containing a description of the patient, symptoms, and medications.Īnswering the question accurately requires the reader to understand symptoms, examine findings from a patient’s tests, perform complex reasoning about the likely diagnosis, and ultimately, pick the right answer for what disease, test, or treatment is most appropriate.

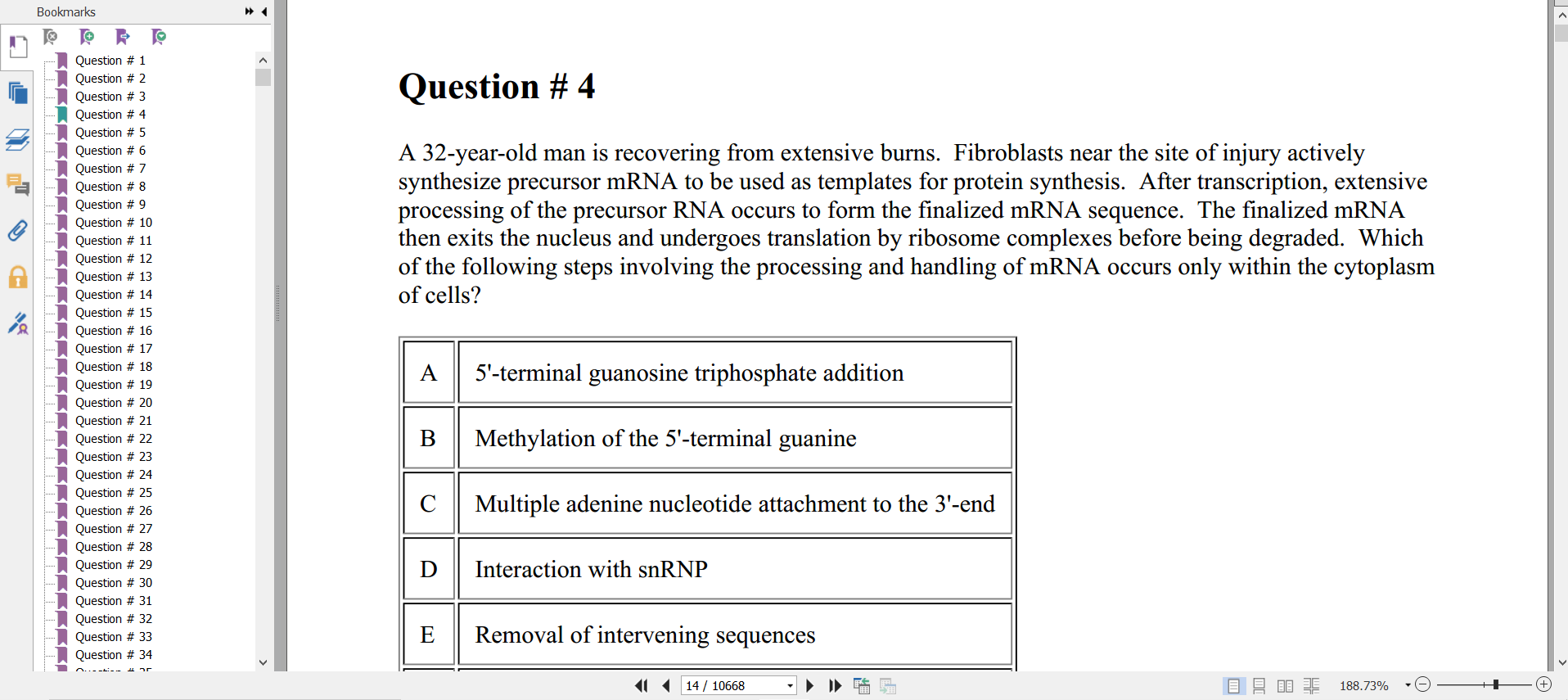

While the topic is broad, answering USMLE-style questions has recently emerged as a popular benchmark for evaluating medical question answering performance.Ībove is an example USMLE-style question. Breakthroughs such as the Transformer have enabled LLMs and other large models to scale to billions of parameters – such as PaLM – letting generative AI move beyond the limited pattern-spotting of earlier AIs and into the creation of novel expressions of content, from speech to scientific modeling.ĭeveloping AI that can answer medical questions accurately has been a long-standing challenge with several research advances over the past few decades. Progress in AI over the last decade has enabled it to play an increasingly important role in healthcare and medicine. Medical question–answering: a grand challenge for AI In the coming months, Med-PaLM 2 will also be made available to a select group of Google Cloud customers for limited testing, to explore use cases and share feedback, as we investigate safe, responsible, and meaningful ways to use this technology. Med-PaLM 2 achieves an accuracy of 86.5% on USMLE-style questions, a 19% leap over our own state of the art results from Med-PaLM. We introduced our latest model, Med-PaLM 2, at our annual health event The Check Up in Q1, 2023. Med-PaLM also generates accurate, helpful long-form answers to consumer health questions, as judged by panels of physicians and users. Our first version of Med-PaLM, preprinted in late 2022, was the first AI system to surpass the pass mark on US Medical License Exam (USMLE) style questions.

Med-PaLM harnesses the power of Google’s large language models, which we have aligned to the medical domain and evaluated using medical exams, medical research, and consumer queries. Med-PaLM is a large language model (LLM) designed to provide high quality answers to medical questions.
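The MedQA figures quoted above (67.4% for Med-PaLM and 86.5% for Med-PaLM 2) are multiple-choice accuracies, and the gap between them is an absolute difference. The following is a minimal sketch of that arithmetic, not the evaluation code used for Med-PaLM. The function names `accuracy` and `compare` and the toy answer key are hypothetical and only illustrate how such a score and comparison could be computed.

```python
def accuracy(predictions, answers):
    """Fraction of questions where the predicted option matches the answer key."""
    if len(predictions) != len(answers):
        raise ValueError("predictions and answers must have the same length")
    if not answers:
        raise ValueError("need at least one question")
    correct = sum(p == a for p, a in zip(predictions, answers))
    return correct / len(answers)


def compare(old_pct, new_pct):
    """Return (absolute gain in percentage points, relative gain in percent)."""
    absolute = new_pct - old_pct
    relative = 100.0 * absolute / old_pct
    return absolute, relative


# Toy, made-up answer key and predictions (not real MedQA data).
toy_answers = ["A", "C", "B", "D"]
toy_predictions = ["A", "C", "D", "D"]
toy_accuracy = accuracy(toy_predictions, toy_answers)
print(toy_accuracy)  # 0.75

# The two accuracies reported in the text.
absolute, relative = compare(67.4, 86.5)
print(round(absolute, 1), round(relative, 1))  # 19.1 28.3
```

Read this way, the improvement is about 19 percentage points in absolute terms, which corresponds to a relative improvement of about 28% over the earlier score.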
